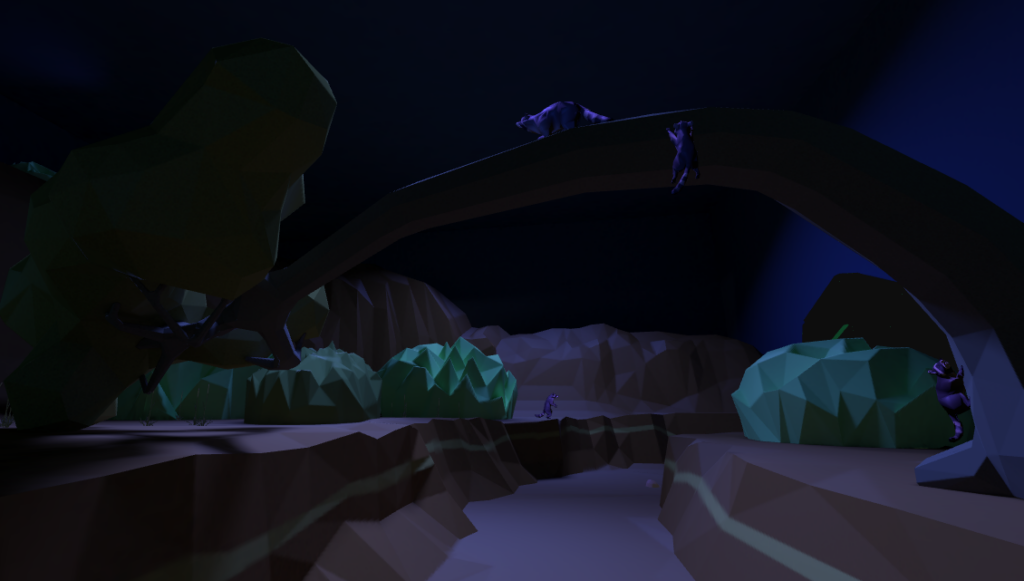

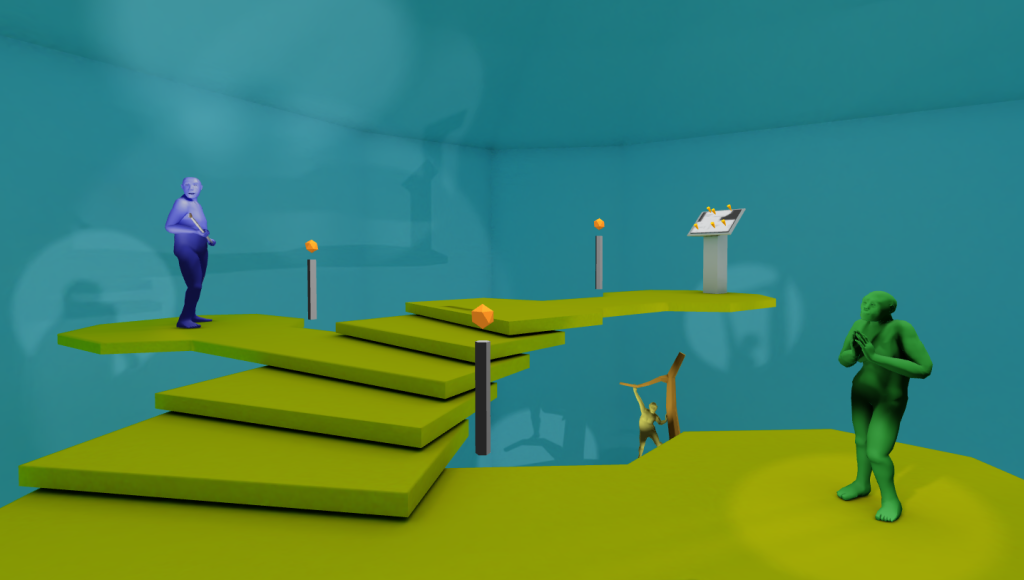

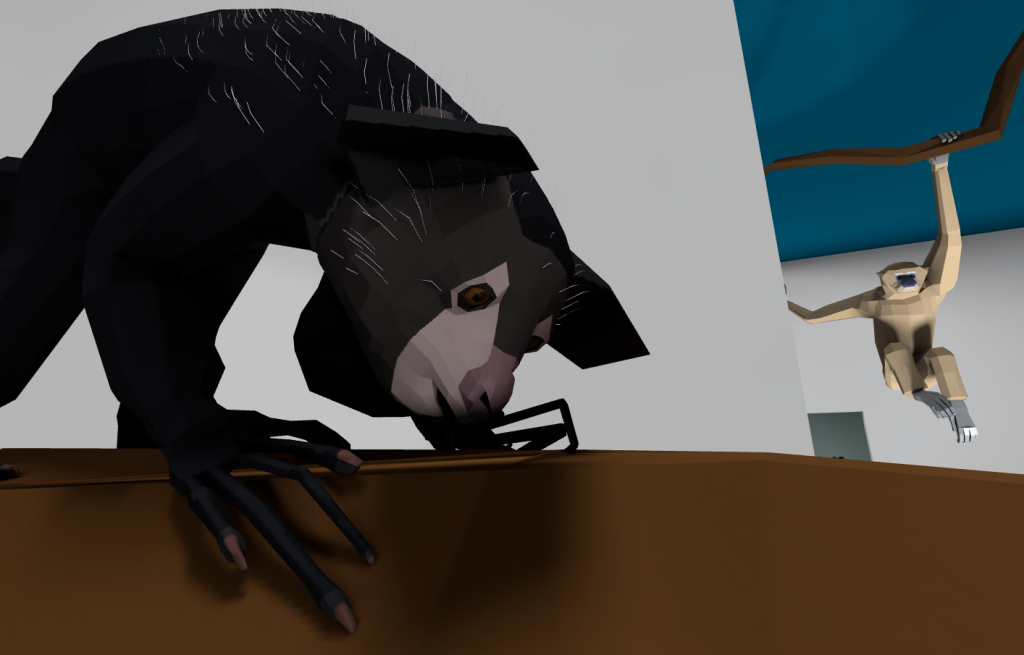

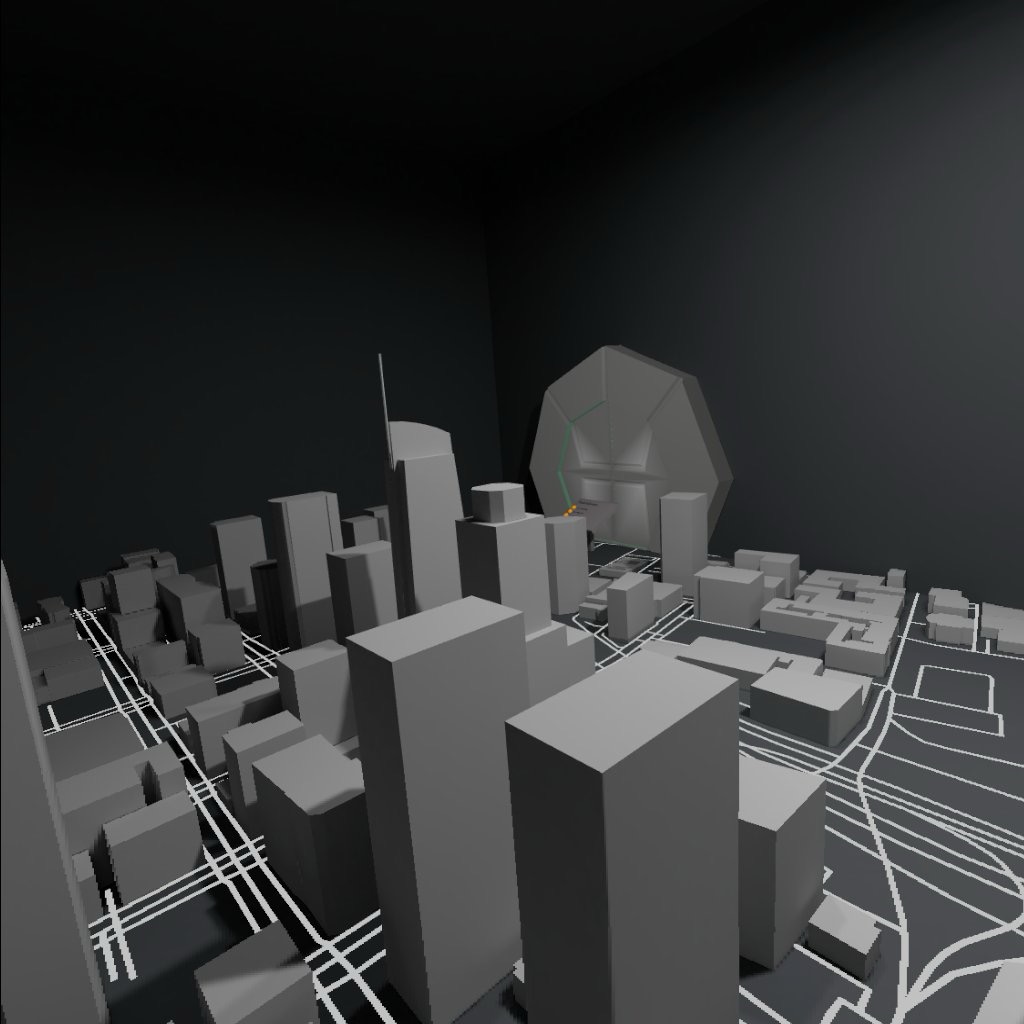

Added walk mode to allow free movement around the scenes. Want to spend more than 6 seconds with the Shasta sloths? Perhaps actually see how their animations are randomized? Now you can commune with the sloths and everything else in the ride! Check it out at www.paleoca.com!